We all know that \(\log(a\cdot{}b\cdot{}c)=\log{a}+\log{b}+\log{c}\). But when does

\[\log{(a+b+c)}=\log{a}+\log{b}+\log{c}?\]

Sometimes this is true! Take for example the rather elegant identity

\[\log{(1+2+3)}=\log{1}+\log{2}+\log{3}.\]

However, at least when looking for positive integer solutions, there seem to be disappointingly few other cases that work (none, in fact). But what if we allowed for more integers? Or negative integers? Or complex integers?! In this post we will take a brief tour through proof by induction, number theory, complex analysis, and the Gaussian integers, before eventually finding infinitely many more solutions to this innocuous-looking problem!

The motivation for this post comes from Matt Parker’s recent video, which introduces and explains some of the basic properties of the logarithm, while also inspiring the spirit of mathematical exploration.

In typical Matt Parker style, after much discussion and elaboration, he converged on a puzzle, which we will now investigate.

The puzzle

Given \(n\geq{}2\), how many sets of non-zero integers \(\{x_1,\ldots,x_n\}\) satisfy

\[\log{\left(x_1+\ldots{}+x_n\right)}=\log{x_1}+\ldots{}+\log{x_n}?\]

This is nothing but a slightly more general statement of the original problem, allowing us to consider \(n\) integers rather than just \(3\). Technically speaking, we really mean multisets of integers, since we will allow the same integer to be used several times (e.g. the ‘set’ \(\{2,2\}\) is allowed).

The solution (over the positive integers)

To get us going we will add an additional restriction: that the integers are positive. The reason for doing this is that the logarithm isn’t conventionally defined for non-positive numbers (don’t worry, we’ll consider negative numbers soon). As explained in the video, under this restriction the puzzle is equivalent to finding sets of integers such that

\[x_1+\ldots{}+x_n=x_1\cdot{}\ldots{}\cdot{}x_n.\]

This follows from the multiplicative property of the logarithm:

\[

\log{x_1}+\ldots{}+\log{x_n}=\log{(x_1\cdot{}\ldots{}\cdot{}x_n)}.

\]

This implies that for our \(\log\) identity to hold,

\[

\log{\left(x_1+\ldots{}+x_n\right)}=\log{(x_1\cdot{}\ldots{}\cdot{}x_n)},

\]

which can only happen if \(x_1+\ldots{}+x_n=x_1\cdot{}\ldots{}\cdot{}x_n\).

This also reveals a rather simple way to generate solutions if we don’t care what value of \(n\) we use. Take for example \(\{2,5\}\). While this set clearly doesn’t work since \(2\cdot{}5\neq{}2+5\), we can pad the set with ones until it does:

\[1\cdot{}1\cdot{}1\cdot{}2\cdot{}5=1+1+1+2+5.\]

That is, the set \(\{1,1,1,2,5\}\) works. Matt referred to these sets (those that use the number one) as the trivial solutions. As we will see later, finding all the trivial solutions is far from trivial, and is actually connected to an unsolved problem in number theory. However, before getting on to that, let’s deal with the non-trivial solutions.
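
The padding trick is easy to mechanise. The following is a minimal sketch (the helper name `pad_with_ones` is our own, not something from the video): each extra one raises the sum by one and leaves the product alone, so we need exactly "product minus sum" of them.

```python
import math

def pad_with_ones(numbers):
    """Pad a list of positive integers with ones until sum equals product."""
    gap = math.prod(numbers) - sum(numbers)
    if gap < 0:
        # Adding ones only increases the sum, so padding cannot help here.
        raise ValueError("product is smaller than sum")
    return [1] * gap + list(numbers)

padded = pad_with_ones([2, 5])
print(padded)  # [1, 1, 1, 2, 5]

# Check the log identity numerically (up to floating point rounding).
lhs = math.log(sum(padded))
rhs = sum(math.log(x) for x in padded)
print(math.isclose(lhs, rhs))  # True
```

Note that the number of ones is dictated by the starting set, which is why this trick alone does not tell us what happens for a prescribed \(n\).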

The non-trivial solutions, and proof by induction

There is at least one non-trivial solution to our \(\log\) identity, since \(2+2=2\cdot{}2\). But are there any more? In the video Matt conjectured that the answer is no. He also gave a rather unsatisfactory (in my opinion) proof of this claim. A shortcoming that we will now rectify!

Before considering the general case let us first establish that there are no more solutions with only two numbers. This is demonstrated by the above picture, from which we see that if either number is greater than \(2\), then there will be empty squares in the ‘multiplication grid’. This means that \(x_1\cdot{}x_2>x_1+x_2\). For those who prefer an algebraic proof of this fact, observe that if \(x_1\geq{}2\) and \(x_2>2\), then

\[1>\frac{1}{x_1}+\frac{1}{x_2}=\frac{x_1+x_2}{x_1\cdot{}x_2}\;\Longrightarrow{}\;x_1\cdot{}x_2>x_1+x_2.\]

From either argument we conclude that

\[x_1+x_2\begin{cases}=x_1\cdot{}x_2&\text{if $x_1=x_2=2$,}\\<x_1\cdot{}x_2&\text{otherwise.}\end{cases}\]

This establishes that \(\log{(2+2)}=\log{2}+\log{2}\) is the only (non-trivial) positive integer solution with only two numbers.
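
A third way to see this, offered here as a supplementary sketch rather than as the argument from the video, is to factorise the difference between the product and the sum:

\[x_1\cdot{}x_2-(x_1+x_2)=(x_1-1)\cdot{}(x_2-1)-1.\]

For integers \(x_1,x_2\geq{}2\) both factors on the right are at least \(1\), so the difference is zero exactly when \(x_1=x_2=2\), and strictly positive otherwise.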

To extend this to arbitrary \(n\), let us proceed inductively. Inspired by the above, let us assume that for some \(n\geq{}3\) and any integers \(x_1,\ldots{},x_{n+1}\geq{}2\) we have

\[x_1+\ldots{}+x_n<x_1\cdot{}\ldots{}\cdot{}x_n.\]

This implies that

\[x_1+\ldots{}+x_n+x_{n+1}<x_1\cdot{}\ldots{}\cdot{}x_n+x_{n+1}.\]

We then notice that the right hand side of the above consists of the sum of two integers, the first of which is greater than two, with the second greater than or equal to two — exactly the situation that we encountered and solved earlier! Therefore

\[x_1\cdot{}\ldots{}\cdot{}x_n+x_{n+1}<\left(x_1\cdot{}\ldots{}\cdot{}x_n\right)\cdot{}x_{n+1}.\]

Putting this all together shows that our assumption implies that

\[x_1+\ldots{}+x_{n+1}<x_1\cdot{}\ldots{}\cdot{}x_{n+1}.\]

To round things off we just need to establish the base case \(n=3\). Here we can again reuse the result for two integers:

\[x_1+x_2+x_3\leq{}\underbrace{x_1\cdot{}x_2}_{>2}+x_3<x_1\cdot{}x_2\cdot{}x_3.\]

Therefore by induction \(x_1+\ldots{}+x_n<x_1\cdot{}\ldots{}\cdot{}x_n\) for all \(n\geq{}3\). This means that \(\log{(2+2)}=\log{2}+\log{2}\) is the only (non-trivial) positive integer solution, no matter how many integers we use!
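
A brute-force search is no substitute for the proof, but it makes a reassuring sanity check. The sketch below (the function name and the cut-off `max_entry=12` are arbitrary choices of ours) lists every multiset of \(n\) integers between \(2\) and the cut-off whose sum equals its product.

```python
import math
from itertools import combinations_with_replacement

def nontrivial_solutions(n, max_entry):
    """Multisets of n integers in [2, max_entry] whose sum equals their product."""
    return [
        combo
        for combo in combinations_with_replacement(range(2, max_entry + 1), n)
        if sum(combo) == math.prod(combo)
    ]

for n in range(2, 6):
    print(n, nontrivial_solutions(n, max_entry=12))
# 2 [(2, 2)]
# 3 []
# 4 []
# 5 []
```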

The trivial solutions, and an unsolved problem in number theory

Whilst we saw that solutions involving the number \(1\) can always be obtained by padding out a product, this doesn’t actually help us to resolve the puzzle. In fact, since we don’t know in advance how many ones will be required, it doesn’t even guarantee that for every \(n\) there will be a solution! As we will now see, given any \(n\) there is always at least one solution and only finitely many, though it is an open problem whether there is always more than one for \(n>444\).

Interestingly when researching this article I found that some serious mathematicians had looked into this problem, albeit mainly in a recreational setting. The results in this section come from this article by Leo Kurlandchik and Andrzej Nowicki. Do check it out!

Let us first establish that there is at least one solution for every \(n\). This is easily done using the following set:

\[\{\underbrace{1,\ldots{},1}_{n-2\text{ times}},2,n\}.\]

Since the sum and product of the elements of this set equal \(2n\), it solves the \(\log\) identity for any \(n\). It is also relatively easy to establish that the number of solutions for any given \(n\) is finite. To see this suppose that \(x_n\) is the largest integer in the set. It then follows that for this set to solve the \(\log\) identity, it is necessary that for all \(i\leq{}n-1\)

\[x_i\cdot{}x_n\leq{}x_1\cdot{}\ldots{}\cdot{}x_n=x_1+\ldots{}+x_n\leq{}n\cdot{}x_n.\]

This implies that the integers \(x_1,\ldots{},x_{n-1}\) are all less than or equal to \(n\). Since

\[\begin{aligned}x_1\cdot{}\ldots{}\cdot{}x_n&=x_1+\ldots{}+x_n\\

&\!\!\!\Longleftrightarrow{}\\

x_n&=\frac{x_1+\ldots{}+x_{n-1}}{x_1\cdot{}\ldots{}\cdot{}x_{n-1}-1}\end{aligned}\]

this implies that \(x_n\) is also bounded: the numerator is at most \(n\cdot{}(n-1)\), and the denominator is at least \(1\), since \(x_1=\ldots{}=x_{n-1}=1\) would make the product \(x_n\) smaller than the sum \(x_n+n-1\). Therefore there can only be finitely many solutions for any \(n\). These upper bounds also mean that we can solve the puzzle for any \(n\) by checking all the sets with integers up to these bounds (though this search can be significantly sped up). The solution up to an impressive \(n=10{,}000\) can be found as sequence A033178 in the On-Line Encyclopedia of Integer Sequences (OEIS).
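
To make the bounded search concrete, here is a small unoptimised sketch (the function name is our own, and this is not the method used to compute the OEIS entry). It enumerates the \(n-1\) smaller entries, each at most \(n\), and solves for the largest entry using the formula above.

```python
import math
from itertools import combinations_with_replacement

def sum_product_solutions(n):
    """All multisets of n positive integers whose sum equals their product."""
    solutions = []
    # The n-1 smallest entries are each at most n, so enumerate them directly.
    for small in combinations_with_replacement(range(1, n + 1), n - 1):
        denominator = math.prod(small) - 1
        if denominator == 0:
            continue  # all ones: the product can never catch up with the sum
        largest, remainder = divmod(sum(small), denominator)
        # Keep only integer values that really are the largest entry,
        # so that each multiset is counted exactly once.
        if remainder == 0 and largest >= small[-1]:
            solutions.append(small + (largest,))
    return solutions

for n in range(2, 8):
    print(n, len(sum_product_solutions(n)))
```

For \(n=2,\ldots{},7\) this prints the counts \(1,1,1,3,1,2\); for instance the three solutions for \(n=5\) are \(\{1,1,1,2,5\}\), \(\{1,1,1,3,3\}\) and \(\{1,1,2,2,2\}\). The running time grows quickly with \(n\), so this is only practical for small cases.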

So, we have established that for any \(n\) there is always at least one solution, and the total number is always finite and computable. But when is there only one solution? Examination of sequence A033178 shows that this is rare, occurring only at the exceptional values of \(n\in\{2,3,4,6,24,114,174,444\}\). In fact it is unknown whether \(444\) is the largest value of \(n\) with only one solution, and this is listed as problem D24 in Richard Guy’s Unsolved Problems in Number Theory. The interested reader should consult this article by Michael Ecker, which shows that if a larger \(n\) exists then it must be an integer multiple of 6 that is one larger than a prime.

What about negative integers?

One could argue that we haven’t yet squeezed all the fun out of this problem. After all, why only consider the positive integers? Why not allow for the negative integers too? This actually introduces a considerable amount of technical difficulty, since we need to define what we mean by the logarithm of a negative number.

To resolve this we look to complex analysis, and the complex logarithm. In direct analogy to the logarithm, the complex logarithm of a complex number \(z\) is defined to be any complex number \(w\) such that

\[e^w=z.\]

A peculiarity here is that this definition implies that complex numbers actually have infinitely many complex logarithms.

This is illustrated in the above figure which shows that the complex logarithm maps any given complex number \(z\) into a set of complex numbers. These have the same real part, but their imaginary parts differ by integer multiples of \(2\pi\). This causes us a problem because the expression we wish to verify, namely

\[\log{\left(x_1+\ldots{}+x_n\right)}=\log{x_1}+\ldots{}+\log{x_n},\]

is no longer uniquely defined! But why does the complex logarithm have this strange property? The reason can be understood from the polar form of a complex number \(z=re^{i\theta}\). From this we see that the complex number

\[w=\log{}r+i\theta\]

satisfies \(e^w=z\), and therefore \(\log{z}=w\). However, the polar representation is not unique! We can add integer multiples of \(2\pi\) to \(\theta\) without changing \(z\). Hence the multiple values of the complex logarithm, all differing in their imaginary parts by integer multiples of \(2\pi\).
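
The multi-valued behaviour is easy to see numerically. The sketch below uses Python’s standard `cmath` module; the helper `log_branches` is our own illustrative name, and it lists only a handful of the infinitely many logarithms.

```python
import cmath
import math

def log_branches(z, ks=range(-2, 3)):
    """A few of the infinitely many complex logarithms of a non-zero z."""
    r, theta = abs(z), cmath.phase(z)  # polar form z = r * exp(i * theta)
    return [complex(math.log(r), theta + 2 * math.pi * k) for k in ks]

z = complex(-4, 0)
for w in log_branches(z):
    # Every w has the same real part, and exp(w) recovers z up to rounding.
    print(w, cmath.exp(w))
```

The entry with \(k=0\) agrees with `cmath.log(z)`, which returns the logarithm whose imaginary part lies between \(-\pi\) and \(\pi\).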

Are there extra solutions using negative integers?

To keep our puzzle well defined, we restrict our attention to the principal value of the complex logarithm. This removes the lack of uniqueness by restricting the imaginary part to lie in the interval \((-\pi,\pi]\):

\[\text{Log}\,z:=\{w:e^{w}=z,-\pi<\text{Im}\,w\leq{}\pi\}.\]

Note that under this definition if \(x\) is a negative integer, then \(\text{Log}\,x=\log{|x|}+i\pi\). So, can we find any new sets of integers \(\{x_1,\ldots{},x_n\}\) such that

\[\text{Log}\,{\left(x_1+\ldots{}+x_n\right)}=\text{Log}\,{x_1}+\ldots{}+\text{Log}\,{x_n}?\]

Unfortunately, the answer is no. To understand why, observe that in order for the above to hold, the imaginary part of the right and left hand sides must be equal. This implies that

- At most one of the integers can be negative.

- If one integer is negative, then

\[x_1+\ldots{}+x_n<0.\]

Let us suppose that the negative integer is \(x_n\). The remaining integers are all positive, so if both points hold then the sum lies strictly between \(x_n\) and \(0\), that is

\[|x_1+\ldots{}+x_n|<|x_n|.\]

However since

\[|x_n|\leq{}|x_1\cdot{}\ldots{}\cdot{}x_n|\]

this prevents the real part of the \(\text{Log}\) identity, which requires \(|x_1+\ldots{}+x_n|=|x_1\cdot{}\ldots{}\cdot{}x_n|\), from holding. Hence we get no new solutions.
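
As a numerical cross-check of this argument, we can test the identity directly with the principal logarithm. This is only a sketch: the helper names, the search range and the floating point tolerance are our own assumptions, and `cmath.log` is taken as the principal value, which is what it returns for ordinary integer inputs.

```python
import cmath
from itertools import combinations_with_replacement

def log_identity_holds(xs):
    """Check Log(sum) == sum of Logs using the principal value cmath.log."""
    total = sum(xs)
    if total == 0:
        return False  # Log 0 is undefined
    lhs = cmath.log(total)
    rhs = sum(cmath.log(x) for x in xs)
    return cmath.isclose(lhs, rhs, abs_tol=1e-9)

def integer_solutions(n, max_abs):
    """Multisets of n non-zero integers in [-max_abs, max_abs] that work."""
    values = [v for v in range(-max_abs, max_abs + 1) if v != 0]
    return [
        combo
        for combo in combinations_with_replacement(values, n)
        if log_identity_holds(combo)
    ]

for n in range(2, 5):
    print(n, integer_solutions(n, max_abs=5))
```

Within this range the only sets found are the positive ones we already knew about, namely \(\{2,2\}\), \(\{1,2,3\}\) and \(\{1,1,2,4\}\).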

What about the Gaussian integers?

Having set up all the machinery to deal with complex numbers, it would be a shame to abandon our search now. But to continue we need the notion of a complex integer.

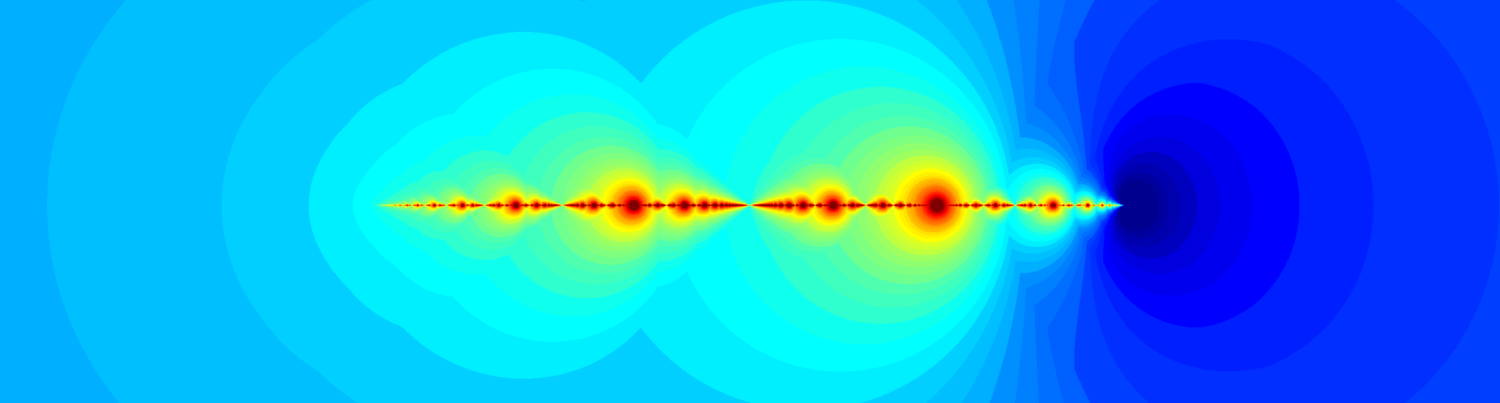

The natural choice here is to use the Gaussian integers. As illustrated above, a Gaussian integer is a complex number whose real and imaginary parts are both integers. So does this yield any more solutions to

\[\text{Log}\,{\left(x_1+\ldots{}+x_n\right)}=\text{Log}\,{x_1}+\ldots{}+\text{Log}\,{x_n}?\]

Yes! Finally! The set \(\{1+i,1-i,2\}\) works, as well as the set with four entries

\[\{1+i,1+i,1-i,1-i\}.\]

In fact, unlike in the positive integer case, we get infinitely many solutions for any odd \(n\geq{}3\). This is because \(\{x,i,-i\}\) works for any (non-zero) Gaussian integer \(x\), as demonstrated by the following sneaky use of \(i\):

\[\begin{aligned}

\text{Log}\,\left(x+i+(-i)\right)&=\text{Log}\,x\\

&=\text{Log}\,x+i\frac{\pi}{2}-i\frac{\pi}{2}\\

&=\text{Log}\,x+\text{Log}\,i+\text{Log}\,(-i).

\end{aligned}\]
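
These claims can be checked numerically with the principal logarithm from Python’s `cmath` module. This is a sketch only: the helper name and the tolerance are our own choices, and the final check samples just a small grid of Gaussian integers \(x\).

```python
import cmath

def log_identity_holds(xs):
    """Check Log(sum) == sum of Logs using the principal value cmath.log."""
    total = sum(xs)
    if total == 0:
        return False  # Log 0 is undefined
    lhs = cmath.log(total)
    rhs = sum(cmath.log(x) for x in xs)
    return cmath.isclose(lhs, rhs, abs_tol=1e-9)

i = complex(0, 1)

print(log_identity_holds([1 + i, 1 - i, 2]))             # True
print(log_identity_holds([1 + i, 1 + i, 1 - i, 1 - i]))  # True
print(log_identity_holds([1 + i, 1 + i]))                # False

# The family {x, i, -i} for a small grid of non-zero Gaussian integers x.
grid = [complex(a, b) for a in range(-3, 4) for b in range(-3, 4) if (a, b) != (0, 0)]
print(all(log_identity_holds([x, i, -i]) for x in grid))  # True
```

Appending further pairs \(i,-i\) to any of these sets leaves both sides unchanged, which is where the solutions for larger odd \(n\) come from.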

But do there exist any more that don’t make use of \(1\) or \(i\)? The answer is yes, but there really aren’t many: two by my count. This essentially follows for exactly the same reason there were so few non-trivial solutions over the positive integers: the absolute value of a product of numbers grows far faster than that of their sum. But can you prove this? And can you find the missing sets? All the tools you need to answer these questions are hidden in the article!